The Problem With AI Text Generation

February 8, 2026 | By David Allen, Ph.D.

Generating text with AI is becoming more prevalent every day. Give an AI instructions about your topic, length, citation style and other parameters, and presto chango, abracadabra: your paper, journal article or book just pops into existence.

To many people, using AI seems a better choice than writing from scratch: they lack the time or the writing skills, they don't care that the AI's stale writing lacks any human touch, or they are simply lazy. Whatever the reason, people who turn to AI to write or correct their work, in part or in whole, risk at best a failing grade or rejection by the school of their choice, and at worst a permanently ruined reputation. This is because teachers, peer-reviewed journals, scholarship, college/university admissions and dissertation committees, and others view AI-generated text as a scourge of cheating.

To combat that scourge, they turn to AI detectors such as Turnitin and GPTZero to tell them whether the work they review was written, in part or in whole, by an AI.

AI Detectors

AI detector algorithms work by finding patterns in word choices, sentence structure and length, semantic meaning, and more. They compare those patterns to patterns found in known AI-generated and human-written texts. Based on how many patterns in the document match AI-generated patterns, many detectors report an estimated percentage of the text that falls into each of four categories: AI-generated, AI-generated and AI-refined, human-written and AI-refined, and human-written.
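To make the idea of pattern counting concrete, here is a deliberately tiny sketch in Python. It is not how Turnitin, GPTZero or any real detector works; their methods are proprietary and far more elaborate. The pattern list and the function names (`AI_PATTERNS`, `flag_sentences`, `ai_percentage`) are invented for illustration, and the "detector" simply reports what share of sentences match at least one pattern.

```python
import re

# Hypothetical "AI-sounding" patterns, invented for this illustration only.
AI_PATTERNS = [
    re.compile(r"\bdelve\b", re.IGNORECASE),
    re.compile(r"\bin conclusion\b", re.IGNORECASE),
    re.compile(r"\bit is important to note\b", re.IGNORECASE),
]


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def flag_sentences(text: str) -> list[bool]:
    """Flag each sentence that matches at least one listed pattern."""
    return [
        any(p.search(sentence) for p in AI_PATTERNS)
        for sentence in split_sentences(text)
    ]


def ai_percentage(text: str) -> float:
    """Return the share of flagged sentences as a percentage (0.0 if empty)."""
    flags = flag_sentences(text)
    if not flags:
        return 0.0
    return 100.0 * sum(flags) / len(flags)
```

Even this toy version hints at the weakness: a human who happens to write "in conclusion" gets flagged just as readily as a machine.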

AI Detectors Are Inaccurate

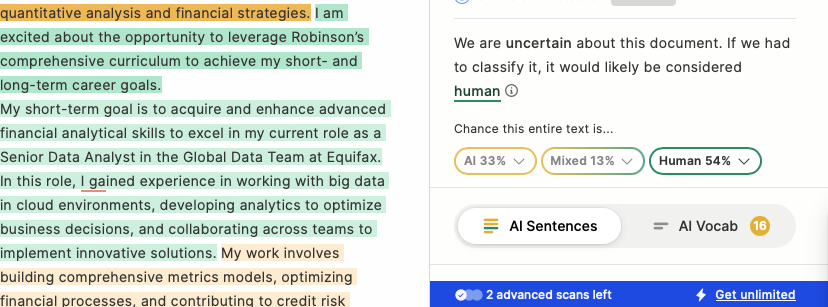

That these detectors are highly fallible is no secret. Many articles and videos online report the results of personal tests. In fact, one reported that a detector judged the American Declaration of Independence to be AI-generated. I ran my own experiment by feeding an AI detector both AI-generated text and my own writing, done without AI help. Both scored as AI-generated. And I assure you that I'm not a clanker!

Furthermore, submitting identical text to multiple detectors reveals that scores vary widely. AI detectors can also hallucinate, returning conclusions that are simply false.

Yet teachers and reviewers still rely on these faulty detectors when judging application essays and statements, grading student work, or reviewing articles for peer-reviewed publications.

To avoid "getting caught," the owners of AI-generated texts often seek editors to "humanize" their documents, expecting a simple process of changing a word or two here and there. As one of those editors, I know that it is not that simple. In fact, in my experience, humanizing AI-generated text is often more difficult than reducing a manuscript's word count by 50%. To understand why it is so difficult, one must first understand how normal editing is done and how editing against these detectors works, and then compare the two.

Normal Editing

During a usual, human-conducted editing process, the editor uses knowledge and skills garnered through study and experience to find issues in word choice (semantic meaning), punctuation, sentence/paragraph structure (syntax), general wordiness, and more. The result is a well-written, human-created document that adheres to the rules of the language's grammar and is concise, easy to understand, and mostly error-free (we are human, after all).

AI Editing

To "humanize" text, editors must do more than change a few words or rewrite a few sentences. The first step is to feed the document to an AI detector that provides a sentence-level analysis of the text. Then, to produce a well-written, human-sounding document, we change inhuman patterns, overused and improper word choices, entire sentences and paragraphs, and even paragraph order, testing each change against the AI detector to see whether the edit succeeded. Yet changing one word to humanize one sentence can cause other sentences, and even whole paragraphs, that had been rated as human-generated to be rated as AI-generated, forcing a whole new set of edits. This is complex, time-consuming work.
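The edit-and-retest cycle described above can be sketched as a simple loop. This is only an illustration under stated assumptions: `detect` stands in for a sentence-level AI detector and `rewrite` stands in for the human editor, and both are hypothetical callables supplied by the caller, not part of any real tool. The key point the sketch captures is that the whole document is rescored after every single edit, because one change can flip the rating of sentences elsewhere.

```python
from typing import Callable

# Hypothetical interfaces, assumed for this sketch only.
Detector = Callable[[list[str]], list[bool]]  # one AI flag per sentence
Rewriter = Callable[[str], str]               # stands in for the human editor


def edit_until_clean(
    sentences: list[str],
    detect: Detector,
    rewrite: Rewriter,
    max_rounds: int = 20,
) -> tuple[list[str], bool]:
    """Rewrite flagged sentences one at a time, rescoring after each edit.

    Returns the edited sentences and whether the final check came back clean.
    """
    current = list(sentences)
    for _ in range(max_rounds):
        flags = detect(current)  # rescore the WHOLE document every round
        if not any(flags):
            return current, True
        first_flagged = flags.index(True)
        current[first_flagged] = rewrite(current[first_flagged])
    # Out of rounds: run one last check on the final state.
    return current, not any(detect(current))
```

Note the `max_rounds` cap: since an edit can create new flags as fast as it clears old ones, there is no promise the loop ever reaches a clean result, which is exactly why the work is so time-consuming.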

The AI Editing Caveat

Though one or more detectors may rate a document as 0% AI-generated, another (or even the same detector) could report the same document as 50% AI-generated. As a result, humanizing editors cannot honestly guarantee that any specific percentage of AI-generated material will remain. This is why my contracts contain a clause stating that there is no guarantee of a 0% AI-generated result, even though I aim for one.

Best Advice

So, my advice to writers is: NEVER USE AI TO GENERATE ANY TEXT.

In fact, I advise against using Grammarly (except for spelling and punctuation repair) because its AI suggests phrasing, word choice, etc., putting difficult-to-remove AI-generated material into a document. That said, using AI as a guide that suggests topics for inclusion, provides information on relevant articles, or creates an outline is perfectly acceptable. However, it is far easier, much safer, and significantly cheaper overall to write all the text yourself.

Remember: No matter how poor your writing, no matter how much you don't like to write, editors like me can help you whip your writing into shape. It's what we do.

Need Help With Your Human-Written Content?

Let me help you transform your writing into polished, professional work—no AI needed.
